Chapter 1

Natural Information Architecture

Story

Once upon a time, there lived an orange cat named Pippa. As a fellow of the ginger cat-egory, and thus a liminal being, she was equally tame-wild and loved-feared. So when Pippa got stuck on top of an eight-foot step ladder in our basement one evening, my wife and daughter weren’t sure how to help without scaring — or being scarred by — our beloved tangerine chaos goblin.

In the blink of an eye, I lifted an ottoman, Pippa hopped aboard, and I lowered her safe and sound. My wife asked, “How on earth did you think of that?” My answer was “mental models.”

In the somatosensory area of my neocortex, there are models of the weight, size, shape, and feel of our cat and our ottoman, and of my height relative to the ladder. This tacit knowledge, that which I don’t know I know, abides deep underground, buried in my unconscious. So I did not solve the problem through conscious deliberation, and I did not use logic, language, or reason. The synthesis of insight happened in a flash. I acted upon a fleeting glimpse in my mind’s eye.

In our family, I am the designated furniture mover, so I had relevant experience. I knew to use the light, puffy ottoman, not the heavy one with wooden legs. And, relative to most folks, I have low functional fixedness, a cognitive bias that makes it hard to imagine the use of objects for anything but their intended purpose.

Perhaps it’s my English heritage. I do tend to be cheeky and to color outside the circle. Also, as an information architect, I built my entire career upon categorical flexibility, the ability to envision multiple ways of organizing the same information. So, to me, an ottoman is not only furniture — it’s also the bucket on the ladder of a fire truck.

I tell this story of the cat who lived happily ever after to illustrate the banality of creativity. Every day, we use mental models to solve problems creatively. We plug a leak, avoid a fight, find a way, or make a deal. It’s a skill so natural, we don’t know we do it. But as a skill, it’s subject to change.

The thesis of this book is that we can build the skill of creative problem solving by engaging with the organization of ideas in our own minds. The plan is to boost metacognition and creativity by making the invisible visible with information architecture, and to have fun together on the way. But make no mistake, this is a perilous journey into the heart of darkness. To move blocks at the base of the tower of culture is to risk a catastrophic revision of belief. So read on, if you dare.

Mental Models

In The Nature of Explanation (1943), Kenneth Craik first proposed that the mind constructs “small-scale models” of reality that it uses to anticipate events. Since then, this idea of mental models has been studied and validated in many fields including psychology, anthropology, economics, organizational behavior, systems thinking, neuroscience, and artificial intelligence.

When I first learned of the concept in the early 90s, I was enchanted. The alternative theory of mental logic, which reduces thinking to if-then rules and logical operators (and, or, not), never made sense. The brain is not a computer. Life is not math. And, all syllogisms are sillygisms, at the mercy of false premises (all men are rational, all swans are white) and not how we think. But this theory of mental models, in which our brains create internal representations and simulations of external reality for purposes of understanding and prediction, resonated deeply with me.

It helped explain why we are so often wrong. Our models are good enough to be useful, yet we mistake them for truth. We think the earth is flat, electricity flows like water, dogs are friendly, mammals have four limbs, gender is a binary, and natural is safe. We infer generalizations from experience and hearsay. We invent categories to make the complex clear. And, lots gets lost in compression. Our mental models are suitable for survival, and yet the map is not the territory.

Personally, I found the concept of mental models to be useful throughout my 25-year career as an information architect. In order to design usable software and websites, while also satisfying my clients’ goals, I had to understand and shape the mental models of users and stakeholders.

Yet, in all this time, my every attempt to study the subject of mental models led to disillusion. On the one hand, I’d discover a book, only to find it rooted in the reductionist metaphor of brain as computer. On the other hand, I’d stumble upon sketches of mental models, where the insight set my mind aflutter, but the rendering was lifeless, like a beautiful butterfly, pinned to a board.

All the same, with respect to how the brain works, I remained curious as a cat. I pounced upon Thinking, Fast and Slow, in which Daniel Kahneman explains that System 1 is fast, unconscious, intuitive, and emotional, whereas System 2 is slow, conscious, deliberate, and analytical. And I tore into the work of Jeff Hawkins, who explains that the brain uses memories to anticipate sensory input, constantly comparing predictions to reality and updating its models as errors occur; and that thousands of cortical columns in the neocortex each build complete models of objects using reference frames, with the brain voting on these models to achieve unified perception. It took a while for me to connect the dots, and then the ‘aha’ moment had me grinning like a Cheshire cat.

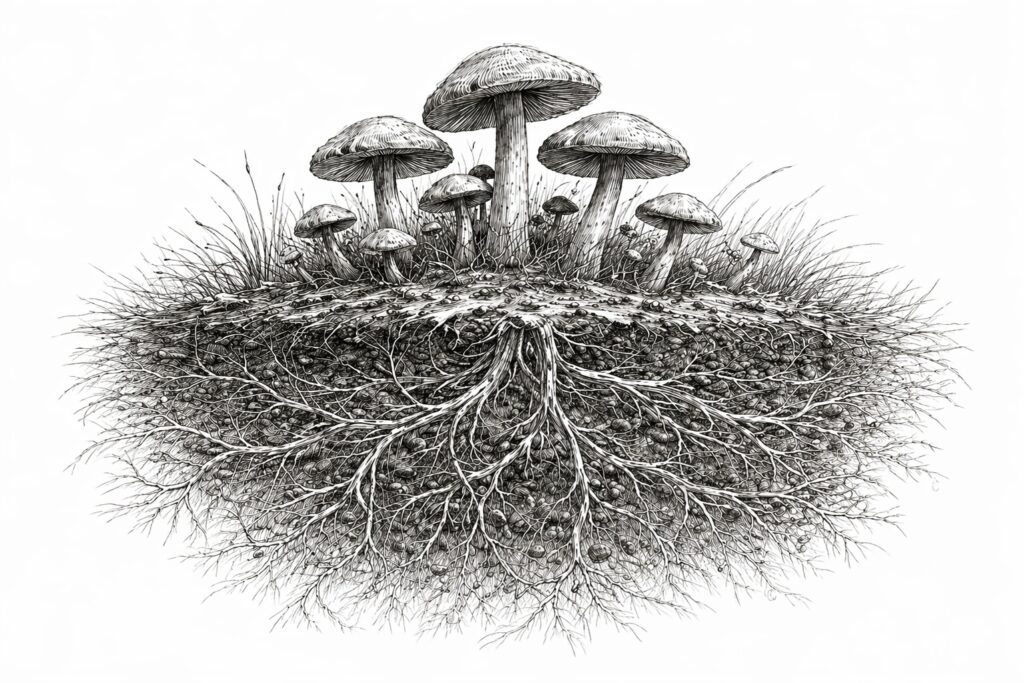

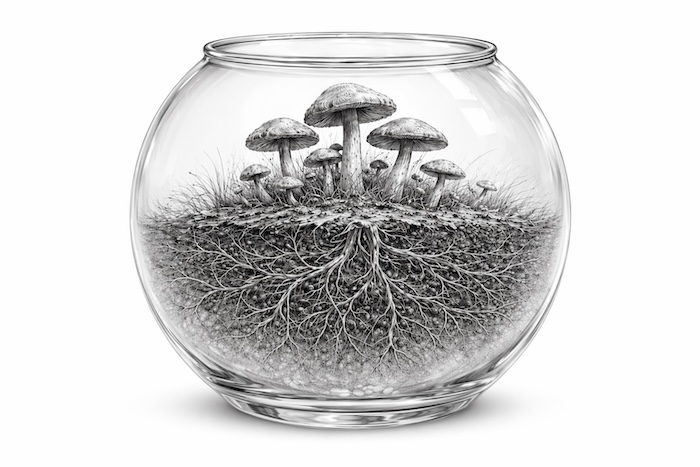

I realized it’s models all the way down. And this insight led to a mental model of mental models that I aim to explain. By way of analogy, let’s start on top with a mushroom. In biological taxonomy, the mushroom was classified as a plant until 1969. And even today, that’s how most folks think. A mushroom grows out of the ground. It doesn’t move. At the grocery, it’s categorized as a vegetable. In the kitchen and in the forest, it’s natural to see the mushroom as an individual.

So, a mental model that’s been rendered as a sketch or in words is a mushroom. The model is visible, not hidden underground in the unconscious. The model stands alone, not part of a network or community. The model is stable, not dynamic. Best of all, it can be shared. Warren Buffett says, “Life is like a snowball. The important thing is finding wet snow and a really long hill,” and the mental model of compound interest slips from mind to mind, as if by telepathy.

Today, this outward and visible sign of a mental model gets all our attention. We celebrate the great mental models. We publish the best specimens in books. Our ability to share the wisdom of first principles, second-order thinking, and inversion is wonderful. And yet, in pinning the butterfly, her magic is lost — our fixation on the mushroom leaves fertile ground unexplored.

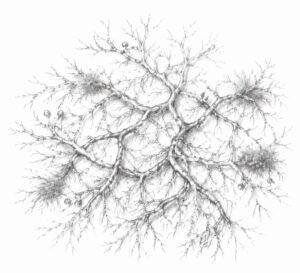

Mycelium is the vast underground network of thread-like filaments or hyphae that forms the main body of a fungus. In addition to water and nutrients, the network transmits information via chemical and electrical signals. A mycelial network integrates messages from thousands to millions of hyphal tips, each able to sense, learn, decide, act, communicate, and remember.

Similarly, the brain has an underground parallel processing network, named the unconscious, and it’s swarming with mental models. Neuroscience suggests that within the human brain are hundreds of thousands of cortical columns, each of which builds its own model of objects and concepts. Each column uses a unique reference frame to map the structure (e.g., one dimension for a timeline, two dimensions for a chessboard, three dimensions for a cat, n dimensions for art). Perception is the consensus our columns find by voting and the basis for everything we know.

Our unconscious mental models are inscrutable. As the neocortex is divided by region, so too are our models fragmented by function (e.g., touch, sight, language). And we have no direct access. So we ignore them. We focus on mushrooms and forget the mycelia. Yet this tacit knowledge is responsible for most of what we think, say, and do. When I rescued the cat on a ladder with an ottoman, the idea came from below — our mycelial mental models are the soul source of insight.

Not unlike intuition, mushrooms arise seemingly out of thin air. In a forest, the morning after rain, you’ll find mushrooms you swear were not there yesterday. But, contrary to folklore, they are not the footprints of dancing fairies, and the way they “pop up” can be explained by science.

Underground, several days prior to emergence, hundreds of hyphae weave themselves together into a hyphal knot, which then pushes upwards to breach the surface. This hyphal knot is the first visible stage of a mushroom’s formation. To the human eye, it appears as a tiny, white dot. Then, often after rain, the mycelium absorbs water and rapidly inflates the cells of the fruiting body we call a mushroom, whose sole purpose is reproduction through the dispersal of spores.

Analogically, the hyphal knot is the first (barely) visible stage of a mental model. In between our conscious and unconscious thoughts, there exists a liminality uncharted by word or image. We experience tip-of-the-tongue feelings of relation, familiarity, meaning, anticipation, or rightness. William James says we ignore this elusive fringe of consciousness. And he’s right. When Pink Floyd sings, “When I was a child, I caught a fleeting glimpse, out of the corner of my eye, I turned to look but it was gone, I cannot put my finger on it now,” we are reminded of the observer effect. The act of observation changes the subject. Our unconscious mind is fertile ground for the hyphal knots of strange connections. We experience a felt sense of déjà vu or danger or a word that’s just out of reach, but if we turn to look, it’s gone; and yet, the knot is not invisible (barely).

In the shadowlands between mycelium and mushroom are hyphal knots of untapped possibility. If we peer through a looking glass, we may identify and tease apart these liminal mental models. What is the underlying structure? How is it organized? What’s the meaning of the categories and labels? It’s time to untangle our mental models by using the lens of information architecture.

Information Architecture

In 1991, I graduated with a degree in English. I had no plan. So I lived with my parents, worked odd jobs, and wallowed in misery. In my spare time, I taught myself to code, explored computer bulletin board systems (no public Internet), and searched for a career. One afternoon, browsing our public library, I discovered a dusty old book about careers in library science. And that book changed my life. I had an epiphany. I realized digital networks would need librarians; and this strange connection led me to library school and a 25-year career in information architecture.

The first definition of information architecture is, “the structural design of shared information environments.” It involves the design of organization, labeling, navigation, and search systems to improve the user experience of websites, software, diagrams, games, books, and buildings. An information architect may focus on findability, sensemaking, or placemaking across digital and physical environments. And, information architecture is the foundation of artificial intelligence.

A novel definition of information architecture is, “the design of mental models.” It involves the organization of ideas in your mind. What are your models and how are they structured and who forged your categories and connections, and when and where and why? And it raises the question: can you change your own mental information architecture? It matters because mental models shape everything we do. And, information architecture is the foundation of natural intelligence.

Let’s paws for a moment to revisit the tale of the orange cat. By way of backstory, it’s true that orange cats are known for a split personality: one cat is friendly, one cat is fierce. Often, I can lift Pippa into a warm embrace. But there are times when our orange cat is unhinged. She once did a flying cannonball into the toilet while I was using it. And in the dark, she hunts us like a lion, red in tooth and claw. That’s why my wife and daughter gave pause. On the ladder, our quantum cat existed in superposition. As observers, they were afraid to disturb her into predatory collapse.

In this story, there are three orange cats. Two live inside the individual, Pippa. One exists as the category of orange cat in your mind. This cat-egory is a prejudice. Objectively, it does not exist. There is no scientific evidence that orange cats all share one brain cell or behave differently. To mix metaphors, my cat tale is a Trojan horse for mental models. Story is telepathy. It’s a way to share categories and mental models from mind to mind that’s both persuasive and memorable.

A mental model is a story that explains how a category works for the purpose of prediction. All three words (story, category, model) are entangled. Like two roads diverged in a yellow wood, it’s hard to tell the difference. All words are categories, and categories are tricky. The boundary is often fuzzy, and as we generalize by similarity for the sake of simplicity, we naturally obscure differences, distort reality, and incur bias. The dictionary is no help, since definitions are often recursive. A choice is a decision is a choice. And, words are handles for mental models. A cat is not only a category but also an invocation of our (un)conscious predictive knowledge networks.

Words are fingers pointing at the moon, but words are not essential. A cat lives and dies by the categories of predator and prey. To solve problems creatively, a cat needs no words. Nor do we. That’s why natural information architecture is concerned not only with words and images but also with the non-symbolic thinking-feeling at the fringe of consciousness that drives creativity.

Creative Problem Solving

Why was there an eight-foot step ladder in our basement? It’s a good question. To respond, let me tell you a story. One day, I noticed a puddle on our faux wood basement floor. I looked up, but the ceiling was dry. I was busy so chose to ignore the mystery. A few weeks later, I noticed soggy chunks of ceiling on the basement floor. I knew we had a leak and began to investigate.

I ran the overhead shower directly above. No leak. I used the wand to spray the bottom edges of the shower. The basement ceiling got wet. I called a plumber. He used our ladder (one mystery solved) and cut holes in the ceiling to expose the drain pipe. He ran the same tests I did with the exact same result. “The floor tiles around the edges of your shower are leaking,” was his diagnosis.

So I hired a tile guy. Two weeks and several thousand dollars later, he was done. We waited a couple of days for the grout to dry, then ran our tests. The basement ceiling got wet. I called the tile guy. He swore by his work but agreed to investigate. He soon arrived with a bucket and an insight. He put the shower wand in the bucket and turned it on. The basement ceiling got wet.

The source of the leak was the wall-to-wand supply line. The plumber fixed it in thirty minutes. I didn’t say anything, but I was mad at him (and at myself) for making the wrong diagnosis and prescription based on a flawed mental model. We had literally failed to think outside the box.

How could I have avoided this costly mistake? How might I prevent similar errors in the future? Would it have helped to sketch my mental model, and then ask: what am I missing? Perhaps. In the practice of information architecture, insight is often inspired by making the invisible visible. But, honestly, I doubt I’ll have the patience or foresight to bring such rigor the next time around.

That’s the trouble with tactics. We don’t know which to use when. We rarely have time. And we don’t even know if they work. We might sketch or brainstorm, invert by asking how best to fail, or dissect the problem into first principles. But how often do we task our conscious minds with these exercises? The best ways are investigation and incubation. First, learn by hands-on inquiry and seek novel perspectives. Then, go for a walk or sleep on it. Let the unconscious figure it out.

Of course, not all men are creatively equal. While gender as a predictor is negligible, genetics accounts for 30 to 60 percent of the variation, and environment shapes the rest. Attributes of creative people include openness to experience, associative thinking, and cognitive flexibility. Domain expertise and intrinsic motivation also play a role. So, creativity is not only a spark or gift, but also a skill, muscle, library, network, and garden. We grow stronger analogically when we cultivate the soil.

I saved the cat with an ottoman, because I had experience with both. The soil of my unconscious was rich with relevant mental models. I failed to solve the puzzle of the leaky shower thanks to a lifetime of shirking home repair. My mental models of plumbing are barren. Creativity tactics aren’t a realistic answer. There is no substitute for experience and multi-dimensional understanding. That’s why we hire experts. The plumber should have run the bucket test. He broke my trust.

Leaky showers and cats up ladders aren’t exotic. These problems are mundane and everyday. That’s why they matter. Because, while we can’t solve all puzzles, we can solve some, and little things add up. Each day, we juggle tasks — what to do, when, where, and how — in our minds. And our solutions are shaped invisibly by the mental models that we accumulate on the way.

If we view a decision as a fork (two roads diverged in a yellow wood), we may miss the option to hedge a bet or try an A/B test or run a side hustle. In a maze, fungi take all paths at once. At each crossroads, the hyphal tips split, until the mycelium maps the maze. Where the mycelium finds food, it reinforces that path and prunes the others. For mycelia, there is no road not taken.

I have made decisions in diverse contexts — as a founder of startups, consultant to corporations, writer, speaker, father, pickleball player, and animal sanctuary caretaker — and I have noticed a pattern of progression. A beginner learns vocabulary and taxonomy. The domain knowledge is explicit. Choices are made consciously and slowly. Mistakes are made often. But an expert relies on intuition. The wisdom is (mostly) tacit. Choices are insights, fast and (mostly) unconscious.

Over the past 25 years, my creative problem solving has improved. Experience plus mindfulness (via meditation and metacognition) refined my mental models and my habits. But that’s not all. I also credit my profession. The practice of information architecture changed my mind. Working with diverse taxonomies enhanced my ability to see things differently (and similarly). I realized categories are connections, analogies hide differences, and classification is the root of creativity.

Strange Connections

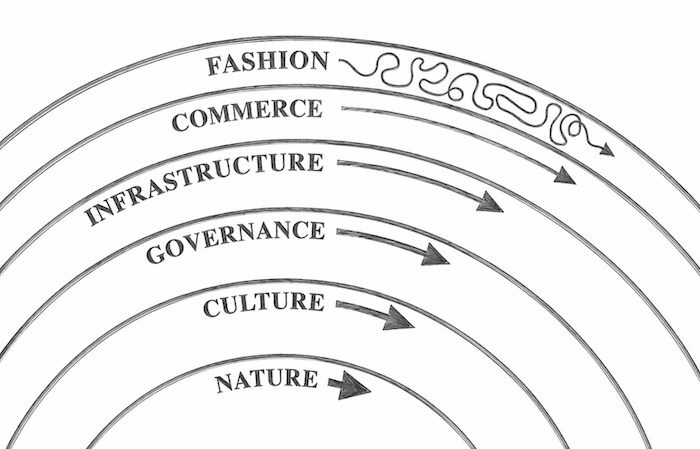

In Y2K, at the first Information Architecture Summit, in a Boston airport hotel, I gave a talk about strange connections, in which I used Stewart Brand’s pace layers and George Lakoff’s Women, Fire, and Dangerous Things — “there is nothing more basic than categorization to our thought, perception, action, and speech” — to frame information architecture as infrastructure.

In pace layering, fast gets all the attention yet slow has all the power. In software and websites, visual design moves fast and grabs the spotlight, while information architecture quietly endures. Similarly, in our minds, conscious thoughts take center stage, and obscure the source of insight.

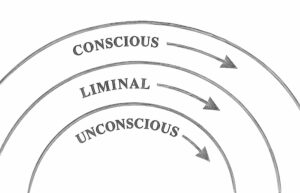

Our mental model of mental models includes, from bottom to top, the pace layers of unconscious (mycelium), liminal (hyphal knot), and conscious (mushroom). In the unconscious, mental models are distributed, fragmented by sense, and hidden. In the conscious, mental models are explicit and sharable. In between, just a twinkle at the absolute threshold of perception, our models are hints of entangled reflection and intuition, a liminality that’s ripe with possibility.

This classification of mental models (unconscious, liminal, conscious) is useful and misleading. It’s a way to lump and split diverse types of mental models, thus inspiring both unification and differentiation. But in defining categories, it obscures a truth — the layers are not independent.

Our model is missing a vital analogy. In the world, rain falls from the sky, soaks into the earth, gets absorbed by mycelium, surges upwards, and inflates the hyphal knot into a mushroom. In our model, the invisible force that works top-down and bottom-up is information architecture.

This is not obvious. Akin to culture, information architecture is like water to a fish, an unseen medium in which we exist. Our brains are wired to make categories of nature’s continuum. We see distinct bands of color in a rainbow. We are blinded by false binaries. We reify the rifts of us-them and self-other. Like a hyphal knot, categorical bias is nearly but not wholly invisible. In liminality, we may untangle the threads of information architecture that govern us bottom-up.

Of course, taxonomy swings both ways. We shape our categories; thereafter they shape us. In our culture, analogies rain down endlessly, drenching our minds with categories, connections, and mental models. In story, values and beliefs trickle deep into our unconscious, where they water the roots of racism and cultivate seeds of compassion. That’s why the practice of information architecture matters — because how we organize the world changes our minds.


A chapter from Natural Information Architecture by Peter Morville